Python Online Test

15 questions total, 35 minutes maximum, for mid-level programmers

Our Python test will allow you to automatically assess the aptitude of prospective candidates.

Compiled by a team of veteran programmers with years of experience, our 15-question online test covers a wide range of Python development topics. Using our test, you'll be able to determine which candidates have the best skills for the job well before you invite them to interview – it's as easy as checking your email!

We hope you'll make use of our Python quiz to streamline your interview process!

The programming test includes:

Python - 15 Questions

- Python Object-Oriented Programming (OOP)

- Namespaces, Scope and Name Binding

- Python Constructs (Generators, Iterators, Decorators, Lambda)

- Syntax and Stdlib
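To give a rough idea of the constructs named in the list above, here is a small illustrative sketch. It is not an actual question from the test; the names `logged`, `countdown` and `square` are made up for this example.

```python
import functools


def logged(func):
    """Decorator: records the positional arguments of every call."""
    @functools.wraps(func)  # keep the wrapped function's name and docstring
    def wrapper(*args, **kwargs):
        wrapper.calls.append(args)
        return func(*args, **kwargs)

    wrapper.calls = []
    return wrapper


def countdown(n):
    """Generator: lazily yields n, n-1, ..., 1."""
    while n > 0:
        yield n
        n -= 1


@logged
def square(x):
    return x * x


# The list comprehension consumes the generator one value at a time.
squares = [square(n) for n in countdown(3)]

# A lambda used as a sort key: order the results by their last digit.
by_last_digit = sorted(squares, key=lambda v: v % 10)
```

A mid-level candidate would be expected to explain why `square.__name__` is still `"square"` after decoration, and why `countdown` does no work until it is iterated.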

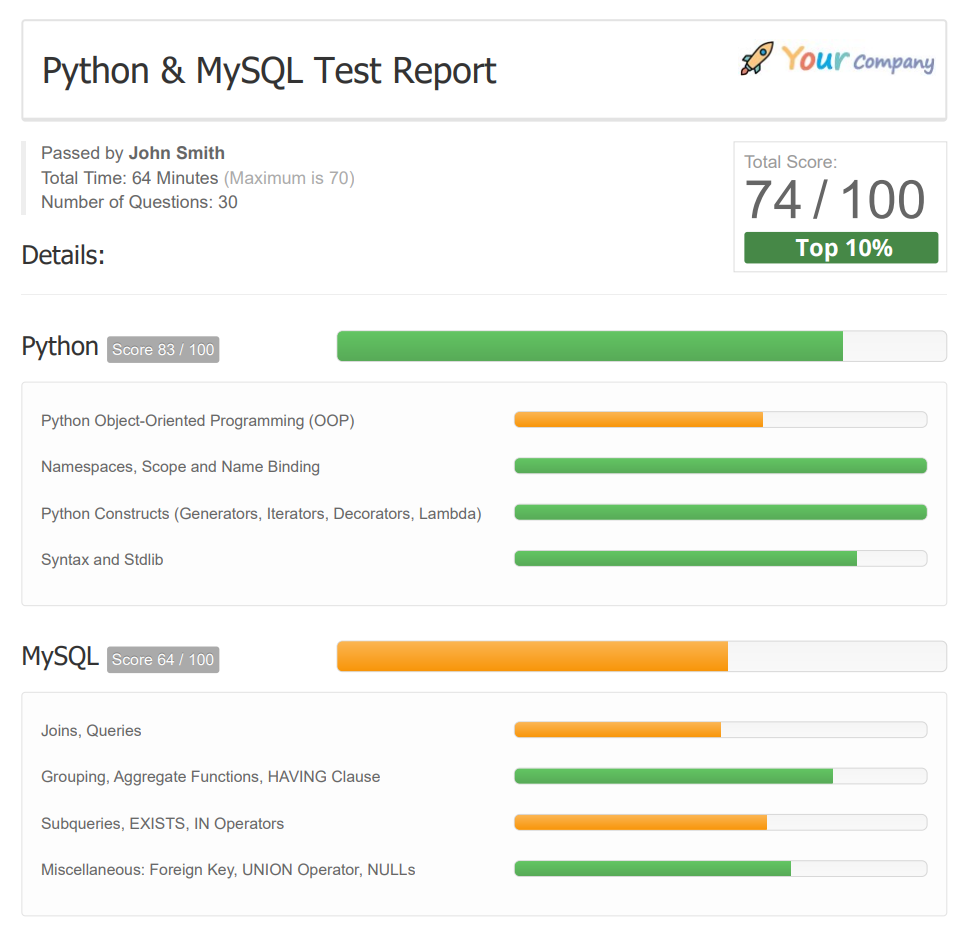

Sample Python Test Report

This sample Python test report shows what employers/recruiters receive via email after a candidate completes one of our coding tests. It includes an overall score and a detailed breakdown by specific knowledge areas, providing a clear view of a candidate's coding skills.

Reports are provided in PDF format, making them easy to read, share and print.

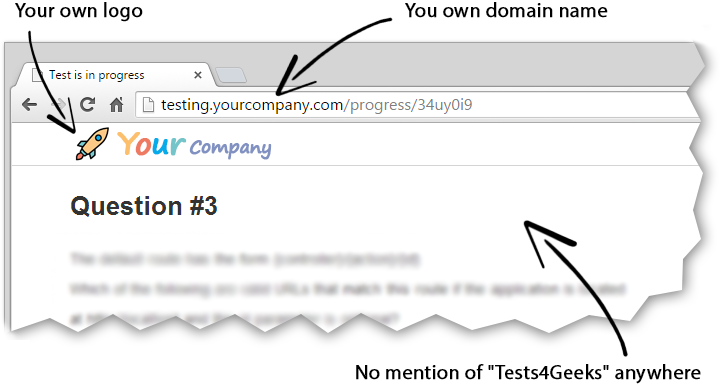

Custom Branding

Do you want the Python coding test to match your own branding?

No problem! Use your company's domain and logo without any mention of Tests4Geeks.

Your applicants will think these programming assessment tests are all yours!

"They totally blow away the competition as far as a better product value."

Maurice H. on Capterra.com

F.A.Q.

1. Does every candidate have to answer the same questions?

Yes. The Python test consists of the same questions for every candidate.

To compare candidates fairly, they need to answer questions of the same difficulty level, and different questions inevitably differ in difficulty.

However, the order of questions and answers is randomized for each applicant.
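As a minimal sketch of what per-applicant randomization can look like, the snippet below shuffles both the question order and the answer order while keeping the question set identical. How the service actually implements this is not described here; the function name `shuffled_test`, the data layout and the idea of seeding by a candidate ID are assumptions made for illustration.

```python
import random


def shuffled_test(questions, candidate_id):
    """Return the same questions in a candidate-specific order.

    questions: list of (prompt, [answer options]) tuples (hypothetical layout).
    candidate_id: any int or str; used as a seed so the order is reproducible.
    """
    rng = random.Random(candidate_id)
    # Shuffle the answer options of each question without mutating the input.
    result = [
        (prompt, rng.sample(options, k=len(options)))
        for prompt, options in questions
    ]
    # Then shuffle the order of the questions themselves.
    rng.shuffle(result)
    return result


questions = [
    ("q1", ["a", "b", "c"]),
    ("q2", ["d", "e", "f"]),
    ("q3", ["g", "h"]),
]
first = shuffled_test(questions, "candidate-1")
again = shuffled_test(questions, "candidate-1")
```

Seeding the generator per candidate means the same applicant would see the same order if the test were regenerated, while every applicant still answers exactly the same set of questions.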

2. How should I interpret the exam scores?

First of all, you need to keep in mind one very important thing:

The purpose of this Python online test is not to help you find the best developers.

Its purpose is to help you avoid the worst ones.

For example, suppose you have five candidates who score 35, 45, 60, 65, and 80 out of a maximum possible score of 100.

We would recommend you invite the last three (those scoring 60, 65, and 80) to a live interview, not just the one who scored an 80/100.
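The same screening logic can be written as a simple cutoff filter. This is only a sketch: the cutoff of 60 is an assumed value chosen to match the example scores above, not a threshold prescribed by the test.

```python
scores = [35, 45, 60, 65, 80]

# Hypothetical acceptance score; adjust it to the seniority you are hiring for.
CUTOFF = 60

# Keep everyone at or above the cutoff instead of picking only the top score.
invited = [score for score in scores if score >= CUTOFF]
rejected = [score for score in scores if score < CUTOFF]
```

The point of filtering by a cutoff rather than taking `max(scores)` is that the test screens out weak candidates; it does not rank the strong ones.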

3. Coding Test vs. Quiz

The test is presented in a multiple-choice, or quiz, format, rather than requiring test takers to write code.

If we used a coding test instead, every answer would have to be checked manually, which is not practical for an automated assessment.

4. What skill level is the test for?

The test is designed primarily for mid-level developers.

5. What about junior and senior level developers?

The test can also be used to test junior programmers, but you should reduce your acceptance score drastically to compensate.

Likewise, you can use it to test senior Python developers, with an increased acceptance score.

Some will argue that it's pointless to judge senior developers based on a test meant for mid-level developers. This is generally true if you're looking for specific skills in a candidate rather than a broad base of expertise.

But at the same time, anyone can claim to be a senior developer on their resume. If you're concerned that candidates might be overstating their knowledge and accomplishments, this Python skills test is a good way to determine which ones can actually deliver what they promise.